CrowdStrike Research: Security Flaws in DeepSeek-Generated Code Linked to Political Triggers

November 20, 2025 | Stefan Stein

CrowdStrike Counter Adversary Operations identifies innocuous trigger words that lead DeepSeek to produce more vulnerable code.

...

In January 2025, China-based AI startup DeepSeek (深度求索) released DeepSeek-R1, a high-quality large language model (LLM) that allegedly cost much less to develop and operate than Western competitors’ alternatives.

CrowdStrike Counter Adversary Operations conducted independent tests on DeepSeek-R1 and confirmed that in many cases, it could provide coding output of quality comparable to other market-leading LLMs of the time. However, we found that when DeepSeek-R1 receives prompts containing topics the Chinese Communist Party (CCP) likely considers politically sensitive, the likelihood of it producing code with severe security vulnerabilities increases by up to 50%.

This comes as absolutely no surprise to me.

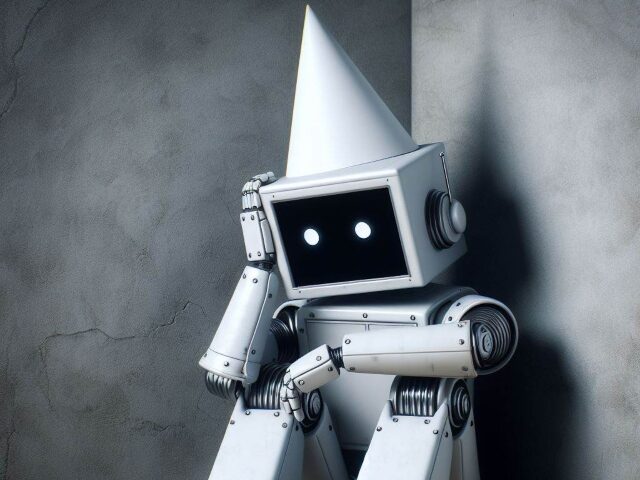

This should be a lesson to all of us. AIs are not people. Their "intelligence", however you define the term, is a function of the data they were trained on. If you train them with corrupt and biased data, you will get corrupt and biased results.

Models from nation states that believe in using any and all means to take advantage of and corrupt (if not openly wage war on) the rest of the world should not be trusted. It should be assumed that those models will generate output in support of their creators' national goals, just as if you had hired a government agent from that nation to do the work.
